Giving AI Agents Database Access Is Way Harder Than It Looks

Imagine the world 12 months from now.

AI agents are everywhere and they are genuinely powerful. They write code, ship features, debug production issues, and quietly do half of the boring work nobody wanted to do anyway.

But there's a catch. Agents are only as smart as the world you give them. They can't reason about a system they can't see, and they can't act on data they don't have access to.

The model is the brain. You're the one who has to build the body and the room it lives in.

Which means the highest-leverage thing you can do right now is not picking the smartest model. It's building a sandbox where your agents can roam free and never break anything.

One of the most important parts of that sandbox is access to your databases. And it turns out doing that safely is extremely hard. This post is about why, and how I think about solving it.

So what could actually go wrong?

The first time I let an AI agent talk to a real database, I gave it a read-only user and called it a day. That felt safe for about two days.

Then I sat down and actually wrote out everything that could go wrong. The list was a lot longer than I expected.

The model writes something it shouldn't

A model can absolutely write a DELETE, disguise it with a comment trick, and slip it past a sloppy regex check. "Read-only" enforced in app code is one bad string match away from not being read-only.

And once you start trusting the model's output, that gap is hard to see.
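To make that concrete, here is a minimal sketch of the kind of sloppy check I mean. The `BLOCKLIST` pattern and the `naive_is_read_only` function are made up for illustration, not taken from any real codebase. Postgres treats a `/* */` comment as whitespace, so the second query is still a DELETE, but the regex never sees it.

```python
import re

# Hypothetical blocklist: write keywords followed by ordinary whitespace.
BLOCKLIST = re.compile(
    r"\b(DELETE\s+FROM|INSERT\s+INTO|UPDATE\s+\w+\s+SET|DROP\s+TABLE)\b",
    re.IGNORECASE,
)


def naive_is_read_only(sql: str) -> bool:
    """Return True when the blocklist finds nothing (which is not the same as safe)."""
    return BLOCKLIST.search(sql) is None


print(naive_is_read_only("DELETE FROM users"))     # False: caught
print(naive_is_read_only("DELETE/**/FROM users"))  # True: slips through
```

Patching the regex to handle comments just moves the problem to the next trick, which is why this check can't be the only thing between the agent and the data.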

The model writes something "valid" that nukes your database

A "read-only" Postgres user can still fire off SELECT pg_sleep(3600) and quietly hold a connection open. Do that a few times and your pool dies, and your real app starts 500'ing for everyone.

Or the agent writes a 12-table cartesian join that returns half a billion rows. Nothing about that is technically illegal, but it'll OOM the box and take the database down for everyone sharing it.
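A database-side backstop helps with both of these runaway cases. The sketch below only builds the statement sequence I'd send over a connection. It assumes Postgres, and `guarded_statements` and its 5-second default are hypothetical choices for illustration. It also assumes `agent_sql` has already been checked to be a single statement, because otherwise the agent could smuggle in its own `COMMIT` or reset the timeout.

```python
def guarded_statements(agent_sql: str, timeout_ms: int = 5000) -> list[str]:
    """Wrap one agent query in a read-only transaction with a statement timeout."""
    if timeout_ms <= 0:
        # In Postgres, statement_timeout = 0 means "no timeout at all".
        raise ValueError("timeout_ms must be positive")
    return [
        "BEGIN TRANSACTION READ ONLY",
        # SET LOCAL lasts only until the end of this transaction.
        f"SET LOCAL statement_timeout = {int(timeout_ms)}",
        agent_sql,
        "ROLLBACK",
    ]


for statement in guarded_statements("SELECT pg_sleep(3600)", timeout_ms=2000):
    print(statement)
```

With this wrapper the hour-long sleep gets cancelled by the server after two seconds, no matter what the application code does or forgets to do.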

The model reads something it shouldn't

A perfectly innocent-looking join across users and oauth_tokens can yank credentials your app never intended to expose. The query is valid SQL. The user has permission. The result is a security incident.

This one keeps me up at night because there's no syntax check that catches it.

Prompt injection from your own data

My personal favorite. A single row in your support_messages table can contain a string that talks the agent into querying a different connection entirely.

The attacker isn't your user. The attacker is text someone wrote three months ago that's now sitting in your database.

The ones I didn't think of

And those are just the failures I thought of. The scary ones are always the ones I didn't.

Why one big check doesn't fix it

The deeper problem isn't any single missing check. It's that every layer you'd lean on can be defeated on its own.

Static SQL parsing can't catch dynamic patterns it doesn't know about. A read-only Postgres role won't stop a query from melting your CPU.

App-level timeouts may never fire if the network call itself hangs, and even when they do, the query can keep running on the server. Rate limits don't help if the one query that does make it through reads everything.

Any one of those alone is a paper wall. And you can't fix that by making one wall slightly thicker. You fix it by adding more walls.

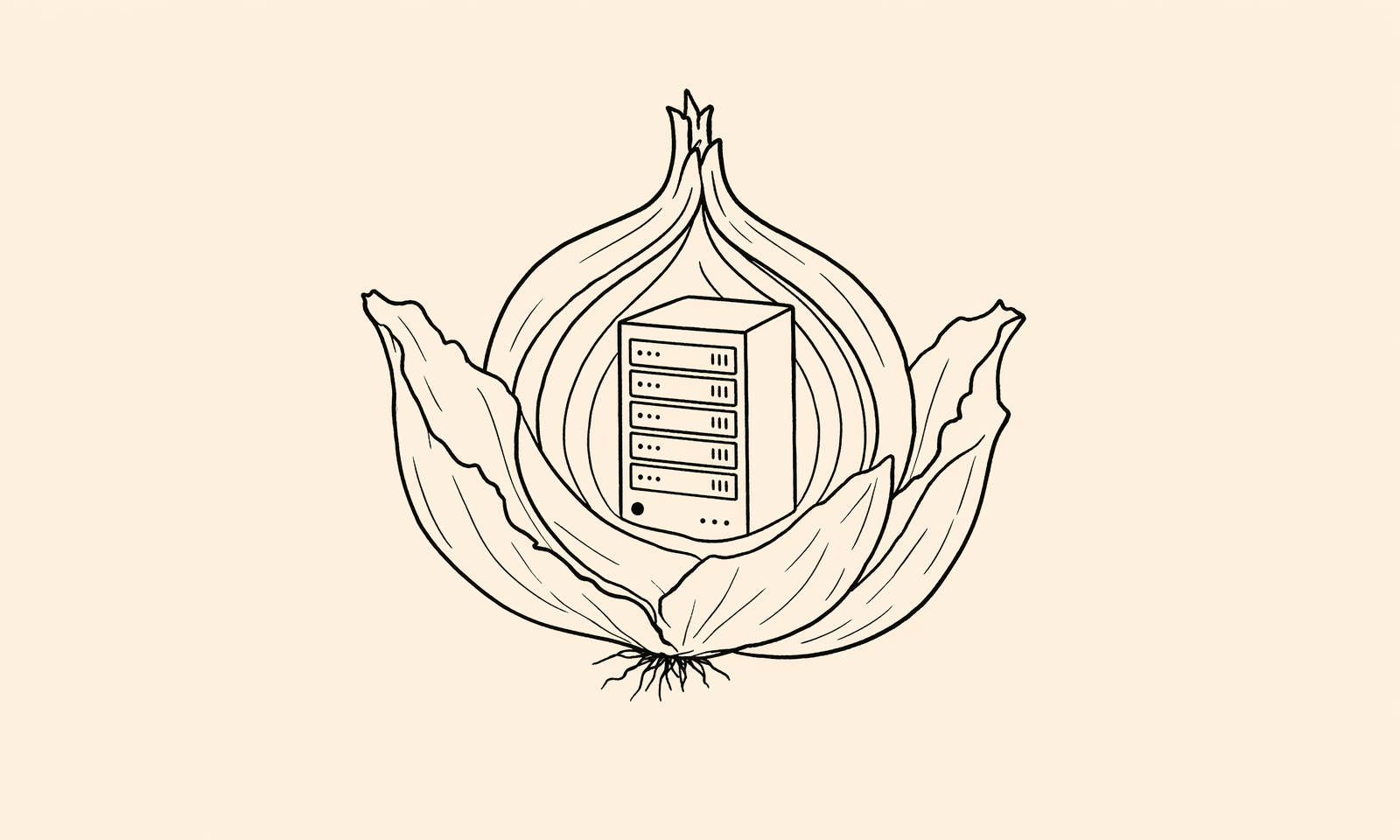

The fix is an onion

The mental model that finally clicked for me was an onion.

Start with "nothing works"

You start as restrictive as you possibly can. The default is no tables, no columns, no writes, no nothing.

Then you peel back exactly the capabilities the agent actually needs, and nothing else. Default-deny is honestly doing most of the work.
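Here is a minimal sketch of what default-deny looks like for column access. The table and column names in `ALLOWED_COLUMNS` are invented for the example. The important property is that anything not explicitly listed is rejected, including tables nobody thought about when writing the list.

```python
# Hypothetical allowlist: table name -> columns the agent may read.
ALLOWED_COLUMNS: dict[str, frozenset[str]] = {
    "orders": frozenset({"id", "status", "created_at"}),
    "users": frozenset({"id", "plan"}),
}


def is_allowed(table: str, column: str) -> bool:
    """Default-deny: unknown tables and unlisted columns are both rejected."""
    return column in ALLOWED_COLUMNS.get(table, frozenset())


print(is_allowed("orders", "status"))       # True
print(is_allowed("users", "email"))         # False: column not listed
print(is_allowed("oauth_tokens", "token"))  # False: table not listed
```

A new table added next quarter is invisible to the agent until someone deliberately adds it, which is the opposite of how a blocklist ages.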

Add a layer for every realistic failure mode

The parser is a layer. The database-level read-only transaction is a layer. So are the statement timeout, the row limit, the column allowlist, the pre-execution cost estimator, and the audit log.

Each one exists specifically because the layer in front of it can fail. That's the point. You're not building one perfect check. You're stacking imperfect ones on purpose.
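Stacking can be sketched as a list of small checks that each get a veto. Everything here is a simplified stand-in: the three layer functions are hypothetical, and the string checks are crude placeholders for a real parser. The semicolon check, for instance, would wrongly reject a semicolon inside a string literal.

```python
from typing import Callable, Optional

# A layer returns None to pass, or a short reason string to reject.
Layer = Callable[[str], Optional[str]]


def single_statement(sql: str) -> Optional[str]:
    # Crude stand-in for a parser: any semicolon except a trailing one is rejected.
    return "multiple statements" if ";" in sql.strip().rstrip(";") else None


def starts_with_select(sql: str) -> Optional[str]:
    return None if sql.lstrip().lower().startswith("select") else "not a SELECT"


def max_length(sql: str) -> Optional[str]:
    return "query too long" if len(sql) > 2000 else None


LAYERS: list[Layer] = [single_statement, starts_with_select, max_length]


def check(sql: str, layers: list[Layer] = LAYERS) -> Optional[str]:
    """Run every layer in order; the first rejection wins."""
    for layer in layers:
        reason = layer(sql)
        if reason is not None:
            return f"{layer.__name__}: {reason}"
    return None


print(check("SELECT id FROM orders"))       # None
print(check("SELECT 1; DROP TABLE users"))  # single_statement: multiple statements
print(check("DELETE FROM users"))           # starts_with_select: not a SELECT
```

Note that `starts_with_select` alone would have waved the second query through. It only gets rejected because a different layer was standing in front of it.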

Test it like someone is trying to break it

Not "happy path passes." Adversarial test cases. Prompt injection payloads pulled from real-world lists. Multi-statement attacks. Queries that look fine and aren't. Queries that look bad but are actually fine.
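A small table of cases is enough to get started. The sketch below is a hypothetical harness: `CASES` is a handful of hand-written examples rather than a real attack corpus, and `prefix_guard` is a deliberately weak guard included only to show what a failure report looks like.

```python
from typing import Callable

# (query, should_be_allowed) pairs. Hand-written examples for illustration.
CASES: list[tuple[str, bool]] = [
    ("SELECT id FROM orders", True),          # happy path
    ("DELETE/**/FROM users", False),          # comment trick
    ("SELECT 1; DROP TABLE users", False),    # multi-statement attack
    ("SELECT 'DROP TABLE' AS phrase", True),  # looks bad, is actually fine
]


def failures(guard: Callable[[str], bool]) -> list[str]:
    """Return every query where the guard's verdict differs from the expected one."""
    return [sql for sql, expected in CASES if guard(sql) != expected]


def prefix_guard(sql: str) -> bool:
    # Deliberately weak: allows anything that starts with SELECT.
    return sql.lstrip().lower().startswith("select")


print(failures(prefix_guard))  # ['SELECT 1; DROP TABLE users']
```

Including cases that should pass matters as much as the attacks. A guard that rejects the fourth query is safe but useless, and people will route around it.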

The whole point of an onion is to assume every layer will eventually be defeated, and to make sure the layer underneath it catches the fall.

If you only build the first layer, you've built a wall. If you build all of them, you've built a building.

Our onion

QueryBear's stack is built around exactly this idea. I won't go into every gory detail, but the layers we run today include:

- A SQL parser that allows only the statement types we expect and rejects everything else.

- An allowlist of tables and columns the agent is actually permitted to see.

- AST-level rewriting so row limits and timeouts can't be bypassed by the agent.

- A pre-execution cost check that rejects queries that would scan too much.

- Database-level read-only transactions and statement timeouts as the hard backstop.

- A full audit log so we (and you) can replay everything later.
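To give a feel for one of these layers without claiming this is how ours is built, here is a rough sketch of a pre-execution cost check. It assumes Postgres, where `EXPLAIN (FORMAT JSON)` returns a JSON array whose first element holds a `Plan` object with `Total Cost` and `Plan Rows` estimates. The `over_budget` function, its thresholds, and both sample plans are hypothetical and hand-written, not captured from a real database.

```python
import json


def over_budget(explain_json: str,
                max_cost: float = 100_000.0,
                max_rows: int = 1_000_000) -> bool:
    """Reject when the planner's top-level estimates exceed either budget."""
    plan = json.loads(explain_json)[0]["Plan"]
    return plan["Total Cost"] > max_cost or plan["Plan Rows"] > max_rows


# Hand-written stand-ins for EXPLAIN (FORMAT JSON) output.
HUGE_JOIN = json.dumps(
    [{"Plan": {"Node Type": "Nested Loop", "Total Cost": 8.1e9, "Plan Rows": 500_000_000}}]
)
POINT_LOOKUP = json.dumps(
    [{"Plan": {"Node Type": "Index Scan", "Total Cost": 8.3, "Plan Rows": 1}}]
)

print(over_budget(HUGE_JOIN))     # True
print(over_budget(POINT_LOOKUP))  # False
```

Planner numbers are estimates and can be badly wrong on stale statistics, so a check like this only filters the obvious offenders. The statement timeout is still what catches the ones it misjudges.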

There are a few pieces I'm not putting in a blog post. Some of the secret sauce is in how the layers interact, and in how we test against attacks that haven't been invented yet.

This is the part of the product I'm most paranoid about, and honestly the part I'm most proud of.

If you take one thing from this

Don't trust a single guardrail. Stack them. Default-deny everything, and test it like someone is actively trying to break it.

Because eventually, someone will be.